Extending the WING Ecosystem for Real-Time, Privacy-First Mobility

Role

UX/UI Designer

Duration

3 months

Industry

Health & Fitness

Overview

This project extends the WING HMI ecosystem, originally designed to centralize vehicle control into a streamlined, driver-first platform. With the rise of embedded AI in mobility systems, the next evolution, the WING Intelligence Platform, focuses on enabling real-time, privacy-respecting AI at the edge, right inside the vehicle.

Our goal: Eliminate cloud dependency for mission-critical decisions and give users full control over their data and AI behavior.

Problem

Smart vehicles are increasingly AI-powered, offering predictive navigation, automated alerts, and conversational assistance. However, these systems rely heavily on cloud computation, which adds latency to split-second decisions. Worse, users lack visibility into, or control over, how their driving data is collected and processed, which erodes trust.

The challenge: How might we design a real-time HMI system powered by edge AI that prioritizes both performance and user data sovereignty?

Proposed Solution

Design Principles

These insights shaped the core product principles that guide both system architecture and interface behavior:

Transparency

Clearly communicate how AI decisions are made and what data is used.

Control

Give users intuitive toggles to manage AI training, cloud syncing, and data usage.

Efficiency

Ensure interfaces reduce scan time and cognitive effort, especially while driving.

Core Architectural Principle:

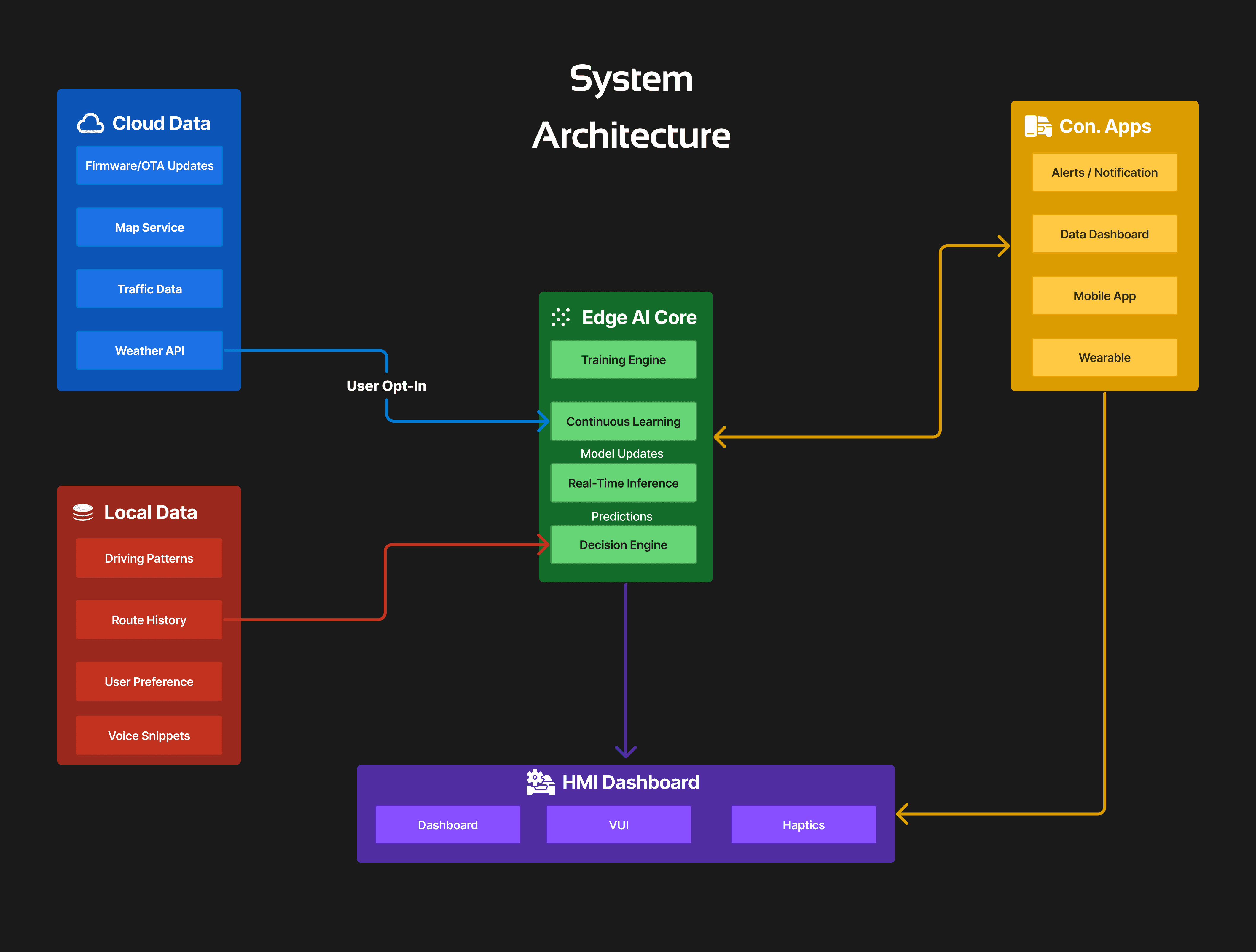

The WING Intelligence Platform is designed as a layered HMI system that distinguishes between:

Sensitive, Locally Trained Data

(e.g., driving behavior, routes, voice interactions)

Generalized, Cloud-Synced Data

(e.g., firmware updates, public map data)

By embedding edge AI inference directly into the vehicle’s onboard system, the platform guarantees real-time responsiveness while preserving privacy: all training, inference, and decision-making happen locally unless the user explicitly opts into cloud features.
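To make the layered split concrete, the opt-in rule described above could be sketched roughly as follows. This is an illustrative sketch only, not the platform's actual implementation: the names `DataClass`, `PrivacySettings`, `cloud_opt_in`, and `may_sync_to_cloud` are hypothetical and chosen for this example.

```python
from dataclasses import dataclass
from enum import Enum


class DataClass(Enum):
    """The two data layers named in the architecture (labels are illustrative)."""
    SENSITIVE = "sensitive"      # e.g. driving behavior, routes, voice interactions
    GENERALIZED = "generalized"  # e.g. firmware updates, public map data


@dataclass(frozen=True)
class PrivacySettings:
    """Hypothetical user-facing toggle; assumed to be off by default."""
    cloud_opt_in: bool = False


def may_sync_to_cloud(data_class: DataClass, settings: PrivacySettings) -> bool:
    """Return True if data of this class is allowed to leave the vehicle.

    Generalized data is always cloud-synced. Sensitive data stays local
    unless the user has explicitly opted into cloud features.
    """
    if data_class is DataClass.GENERALIZED:
        return True
    return settings.cloud_opt_in


if __name__ == "__main__":
    defaults = PrivacySettings()
    opted_in = PrivacySettings(cloud_opt_in=True)
    print(may_sync_to_cloud(DataClass.SENSITIVE, defaults))    # local only by default
    print(may_sync_to_cloud(DataClass.SENSITIVE, opted_in))    # allowed after opt-in
    print(may_sync_to_cloud(DataClass.GENERALIZED, defaults))  # always cloud-synced
```

The design choice this sketch highlights is that local-only is the default state, so a missing or unset preference never results in sensitive data leaving the vehicle.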

The system is designed to sync with companion mobile and wearable apps for low-latency alerts, haptic feedback, and data review outside the vehicle.

Core User Flows